Imagine you come home from the office, frustrated and angry after a long day’s work. As soon as you walk in, a personal friend in the form of a digital virtual assistant senses your mood, orders your favorite food, dims the lights and plays your favorite music to soothe you to sleep. It sounds like a dream, but this dream may soon be a reality.

Numerous companies are working on building these virtual friends for us. To name a few, we have Siri by Apple, Alexa by Amazon, Cortana by Microsoft and Bixby by Samsung. Every company is striving to get ahead of the others when it comes to these virtual assistants. Owing to this large-scale competition, we now have little talking bots that do wonders for us: making hotel and restaurant reservations, controlling home automation devices, managing to-do lists, navigating and more.

But are we at the point yet where we can actually call them a friend? Imagine having a virtual assistant like the one shown in the movie “Her”, which can understand the deeper context of your daily conversations and provide more human-like interactions rather than a monotonous, scripted robotic conversation. This is what Emotional Artificial Intelligence (AI) aims to make possible.

Empathetic technology is making its way into our daily lives, with companies striving to provide better human-machine interactions that take into account the emotional or empathetic context of a user. Affectiva’s Emotion API, IBM’s Watson Tone Analyzer and Empath’s Vocal Emotion Recognition API are a few such products that offer emotion recognition by analyzing signals such as speech paralinguistics, tone and other characteristics of the speaker.

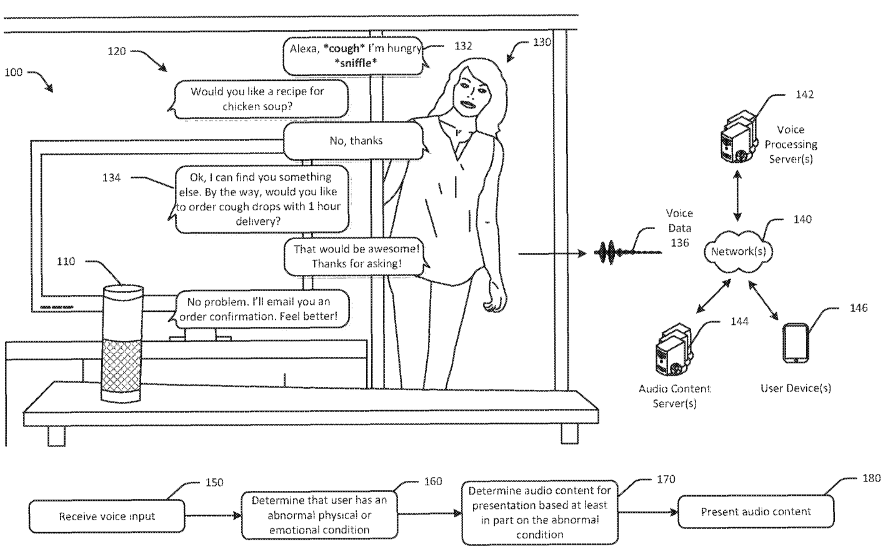

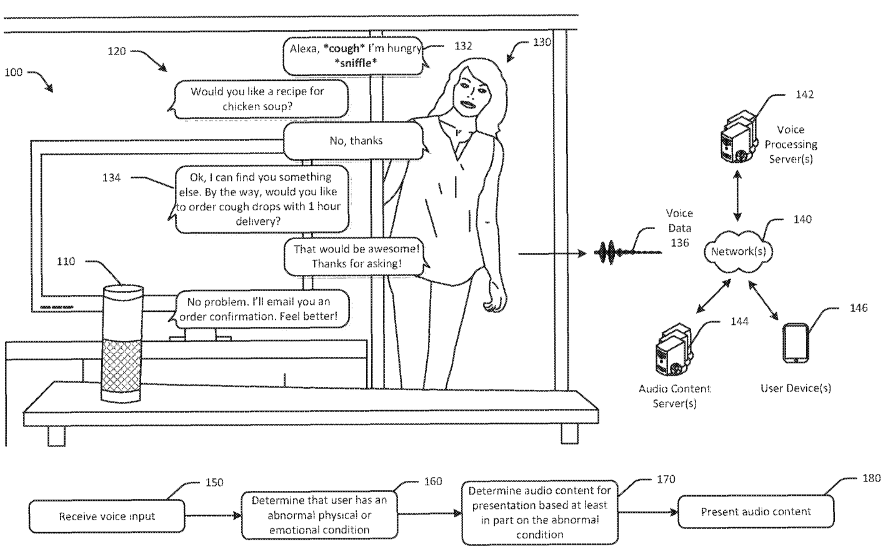

A look at recent patent filings from some major companies hints that emotionally intelligent assistants are on the way. Patent US 10,096,319, filed by Amazon, hints at adding emotional capability to Alexa. According to the patent, the system would be able to determine the physical and emotional characteristics of the speaker from their voice and present emotionally relevant content to the user.

Similarly, a patent filed by Google, US2014280296A1, describes a system that provides helpful information based on detecting the emotion of a device’s user. The system detects the user’s emotion while they interact with the device and provides further assistance based on the recognized emotions. Another Google patent, US20160260135A1, describes selecting at least one content item that is relevant to the user based on their mood. These patents may be a hint that Google is trying to implement similar technology in its virtual assistant.

Another patent, US20170123825A1, filed by Microsoft, hints at incorporating Emotionally Connected Responses in its virtual assistant Cortana.

Huawei also plans to add emotion-sensing capability to its virtual assistant. The aim is for the assistant to hold more emotionally relevant conversations.

The following are some potential use cases for virtual assistants driven by emotional intelligence:

- Adding personalization to conversations: Future chatbots will be able to recognize your current mood and talk to you more realistically by varying their own tone.

- More helpful recommendations by analyzing behavior: Imagine you are exhausted from the day’s work. You get home and wake up your chatbot. The intelligent bot immediately recognizes your current state by analyzing your emotion, then automatically dims the lights and plays soothing music from your favorite playlist.

- Better customer interactions and targeting: When employed for online shopping, chatbots can offer better interactions by analyzing the mood of a customer after a recent purchase (frustrated, angry or happy).

- Detecting road rage: While you are driving, the personal assistant in your car could detect when you feel angry or frustrated and take action to prevent you from driving recklessly.
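
To make these use cases a little more concrete, here is a minimal sketch of the "mood in, action out" step they all share. It assumes some upstream emotion recognizer has already produced a mood label and a confidence score. The labels, action names, `MoodEstimate` class and `choose_actions` function are all hypothetical and are not taken from any of the products or patents mentioned above.

```python
from dataclasses import dataclass

# Hypothetical mapping from a detected mood to assistant actions.
# Both the mood labels and the action names are illustrative only.
ACTIONS_BY_MOOD = {
    "exhausted": ["dim_lights", "play_soothing_music"],
    "angry": ["play_soothing_music", "suggest_break"],
    "happy": ["play_upbeat_music"],
}


@dataclass
class MoodEstimate:
    """Output of an assumed upstream emotion recognizer."""
    label: str
    confidence: float  # between 0.0 and 1.0


def choose_actions(estimate, min_confidence=0.6):
    """Return the actions for a mood, or nothing if the guess is too uncertain."""
    if estimate.confidence < min_confidence:
        return []
    # Unknown moods map to no action rather than raising an error.
    return list(ACTIONS_BY_MOOD.get(estimate.label, []))


print(choose_actions(MoodEstimate("exhausted", 0.85)))
# ['dim_lights', 'play_soothing_music']
```

The confidence threshold is a deliberate design choice in this sketch: an assistant that acts on a wrong guess about your mood is likely to be more annoying than one that does nothing.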

It will be interesting to see what other changes these emotionally intelligent chatbots bring to our lives.